Development and Preliminary Evaluation of an Instrument to Measure Readiness for Problem-Based Learning


Abstract

Purpose

This study was conducted to develop and provide a preliminary evaluation of an instrument to measure students’ readiness for Problem-Based Learning (PBL). The instrument assessed students’ readiness for four main aspects of the PBL environment: problem-solving, self-directed learning, collaborative learning, and critical thinking.

Methods

The Readiness for PBL (RPBL) instrument was trialed in two polytechnics in Singapore. Exploratory and confirmatory factor analyses, together with internal consistency estimates, were used to explore the internal structure of the instrument.

Results

The results of the study confirmed that the internal structure of the instrument was tenable within the cohorts that participated in the study.

Conclusions

The results of this study suggest that the RPBL may provide a practical means by which students’ readiness for PBL may be assessed, with the goal of enhancing the benefits of PBL environments for these students.

INTRODUCTION

Since its introduction in 1969 within a Canadian medical school, the use of Problem-Based Learning (PBL) has been growing in popularity. PBL can be defined broadly as a constructivist learning environment in which the focus is on experiential learning, organized around investigation, explanation and resolution of problems (Hmelo-Silver, 2004). In this approach, students learn content and thinking strategies by working on problems in small collaborative groups. A typical PBL session commences with an ill-defined problem. Students must then work together in small groups to address the issues associated with the problem and to address relevant gaps in their own knowledge to arrive at a suitable solution, with a facilitator on hand to guide the students through the process. Apart from the content-related knowledge and skills that students acquire, the process is intended to promote the development of other skills such as critical thinking and problem-solving, as well as both self-directed and collaborative learning competencies (Lieux, 2001; Schmidt, Vermeulen & Van Der Molen, 2006).

Students’ Readiness for PBL Environments

Whilst PBL has accumulated many advocates across education sectors, evidence has appeared which suggests that some students find PBL environments challenging. For example, research has shown that, while many students enjoy PBL and find the approach satisfying (Caplow, Donaldson, Kardash & Hosokawa, 1997; Rideout et al., 2002), not all students are eager to adopt this approach (Alper, 2008; Hamalainen, 2004; Hood & Chapman, 2011). In one recent study, for example, Fukuzawa, Boyd and Cahn (2017) reported that only 22% of students who had experienced PBL moderately or strongly agreed when asked whether they would like to attend more PBL class sessions. Furthermore, only 41% of these students indicated that they would like to take another course in which PBL was used. Fukuzawa et al. also reported that some students' motivation was negatively affected by their unfamiliarity with the PBL process.

Recent research has underscored the importance of students’ readiness for major transitions within their education journeys. In general, the term readiness in an education context refers to the extent to which students enter a given environment with the attributes necessary to engage in, and benefit from, the learning experiences proffered by that environment (see, for example, Kentucky Department of Education, 2019). In a 2013 report focused on students’ readiness for tertiary level studies (ACT Policy Reports, 2013), the ACT noted that “Many students do not persist in college to degree completion because they are ill-prepared for college and require remedial coursework. Many students also lack the academic behaviors and goals that are needed to succeed in college” (p.2). Various recent studies have confirmed that students’ readiness for specific aspects of post-secondary learning environments is an important predictor of the outcomes they achieve in such environments. For example, studies have indicated that readiness factors such as students’ learning preferences, learning approaches and motivation significantly predict their attitudes to, performance in, and satisfaction with, university life in general (e.g., Agherdien, Mey & Poisat, 2018; Wasylkiw, 2016). Readiness has also been found to significantly predict students’ responses to, and performance in, specific types of university learning environments, such as those that rely heavily on information communication technologies (e.g., Tang & Chaw, 2013).

As noted by De Graaff and Kolmos (2003), PBL environments differ from more ‘traditional’ learning environments in various key ways. Amongst these differences, PBL relies heavily on presenting ill-defined problems to students, rather than clearly-defined tasks that have a single correct answer. PBL also places a strong emphasis on students applying critical thinking skills through both self-directed and collaborative learning processes, which may be emphasized variably in more traditional education contexts. Given these characteristics, it is likely that some students will simply be better prepared, or ‘ready’, for PBL environments than others, either because of their own background characteristics, or because of their previous exposure to such methods. This level of readiness may, in turn, account for some of the performance and response variability seen across students when they are first exposed to PBL environments at the post-secondary level.

In light of findings which indicate that students can respond variably to PBL environments, it would be useful for institutions that rely on PBL as a primary teaching and learning approach to be able to assess students’ readiness for different elements of the PBL environment. This would enable the institutions to develop targeted scaffolding interventions to assist any students who are ‘at risk’ of underperforming in these environments to reach their full potential. In a qualitative study by Pepper (2010), for example, it was found that a large number of students did not report enjoying PBL because they felt that they needed to be trained to be effective self-directed learners before being exposed to PBL. Belland, Chan and Hannafin (2013) also asserted that it is fallacious to assume that students will automatically be engaged when faced with authentic, problem-based experiences. Suitable scaffolding is essential to promote high levels of student motivation and engagement in these contexts, with the aim of creating learning spaces that are supportive of, and conducive to learning for, all students. How such scaffolding could be offered by individual institutions is a topic beyond the scope of this paper, but there is an emerging body of literature on effective ways in which this can be done (e.g., Ertmer & Glazewski, 2020).

Key Elements of PBL Environments

As indicated, the PBL process requires students to solve problems collaboratively in small groups, and to address any gaps in their knowledge first through self-directed learning, and then by sharing their findings within their groups. Critical thinking will be a key component of the problem-solving process in any PBL environment. As noted previously, PBL environments thus confront students with a range of demands with which they may have limited prior experience. In particular, PBL places a heavy emphasis on four key processes, two related to students’ cognitive processes (problem-solving and critical thinking), and two related to their learning processes (self-directed and collaborative learning).

Problem-Solving. In PBL, students are presented with an ill-defined problem and must work on it using various prescribed methodologies to arrive at a possible solution. Given its emphasis on ill-defined problems, problem-solving in PBL involves students deconstructing the problems they are given, defining these in their own words, finding resources to help them address the problems, and testing out their solutions (see Ertmer & Glazewski, 2020).

Critical Thinking. One of the key rationales proffered by some researchers for using PBL is that it is designed to promote critical thinking skills (Maudsley & Strivens, 2000). While definitions of critical thinking vary considerably within the literature, most definitions indicate that this form of thinking involves the disciplined conceptualisation, application, analysis, synthesis, and evaluation of information to reach a solution or conclusion (e.g., Bahr, 2010). Given the emphasis in PBL on students responding to ill-defined or ill-structured problems, it is inevitable that some form of critical thinking will be required throughout this experience (Savery, 2006).

Self-Directed Learning. Gibbon (2003) defined self-directed learning as “any increase in knowledge, skill, accomplishment, or personal development that an individual selects and brings about by his or her own efforts using any method in any circumstances at any time” (p.2). Self-directed learning is an essential component of PBL environments, because all students must use self-directed strategies to address the problems presented to them in these environments (Hmelo, Gotterer & Bransford, 1997; Ozuah, Curtis & Stein, 2001).

Collaborative Learning. A fourth key element of PBL is collaborative learning (Savery, 2006). Laal and Laal (2012, p. 1) defined collaborative learning as “an educational approach to teaching and learning that involves groups of learners working together to solve a problem, complete a task, or create a product”. Laal and Laal further identified the following five aspects as critical in collaborative learning: positive interdependence, individual and group accountability, interpersonal and small group skills, face-to-face promotive interaction, and group processing. In PBL, working in small groups is designed to help distribute cognitive load, as well as to build students’ abilities in working as members of teams (Hmelo-Silver, 2004).

Instruments to Measure Readiness for PBL

Despite the potential importance of students’ readiness to engage in PBL environments, the authors were unable to locate any generic instruments designed specifically to assess this construct. This conclusion was based on a systematic search of all articles with the keywords “PBL” or “Problem-Based Learning” across nine databases: ERIC, EBSCO, Google Scholar, ProQuest Psychology/Education Database, PsycINFO, Sage Journals online, Wiley online, and A+ Education. A small number of papers have been published in which PBL readiness was a stated study focus, but in these cases, the researchers have focused only on specific elements of the PBL environment (most often, the self-directed learning aspect). For example, Leatemia, Susilo, and van Berkel (2016) sought to identify students’ readiness to engage in a hybrid PBL curriculum, but focused specifically on their preparedness for self-directed learning. Using a combination of quantitative and qualitative methods across five medical schools in Indonesia, they found that only half of the students had a high level of self-directed learning readiness. While results of this kind do affirm that students present with varying levels of the skills required for specific elements of PBL, there is a need for a more comprehensive assessment of PBL readiness.

The authors did identify one study that focused specifically on developing an instrument to assess ‘suitability’ for PBL environments. Chamberlain and Searle (2005) sought, in their research, to develop a student selection instrument designed to assess candidate suitability for a PBL curriculum. In the study, a sample of 69 volunteer candidates attending an interview for entry to medical school formed 13 teams of 5 or 6 candidates each. Each candidate was then assessed independently by two assessors. Attributes deemed to be suitable and unsuitable for a PBL environment were then identified and used as the content of the instrument. Suitable behaviours included active listening, summarising, reflection, contributing to and complying with group rules, balancing the task with discussion, and respecting and tolerating varied opinions. Unsuitable behaviours for PBL included prejudice toward other people, depreciative behaviours, demeaning people, not valuing comments, egocentricity, and being domineering. The authors reported that the instrument demonstrated good item discrimination, the potential for good agreement between raters, strong internal consistency, and good acceptability among candidates. Whilst an instrument focused upon suitability for PBL could potentially be used as a basis for developing a readiness for PBL instrument, the instrument developed by Chamberlain and Searle was also restricted in its focus, including only attributes related to the teamwork element of PBL.

A third instrument reviewed by the researchers was the PBL Attitude Scale developed for a study in a medical school in Turkey. In the study, Alper (2008) administered a survey to 313 first-year students and 136 second-year students of the Faculty of Medicine, Ankara University. According to the author, the aim of the study was to measure students’ attitudes toward some facets of PBL. These facets were Problem-solving (PS), Self-Directed Learning (SDL), Group-Based Learning / Co-operative Learning (COL), and Facilitator and Web-Supported Environments. The internal consistency of the instrument was reported to be high at 0.86. No psychometric properties other than instrument internal reliability were reported. Again, while an instrument developed to assess students’ attitudes toward PBL could conceivably be adapted to develop a measure of students’ readiness for PBL, this instrument (whilst more comprehensive in its coverage of PBL elements) did not focus upon all four aspects of PBL as discussed previously. In particular, the instrument excluded any consideration of critical thinking, which is one of the four core elements of the PBL approach.

Study Rationale

The research summarized previously suggests that students can exhibit varying degrees of preparedness or readiness to engage in different aspects of PBL, which may produce adverse effects on academic performance or overall learning experiences. Given that many institutions in Singapore now rely heavily on PBL as a learning approach, it would be useful for these institutions to be able to assess students’ readiness for these environments before they are exposed to them. This knowledge would then equip the institutions to offer some form of scaffolding for students who are at risk of underperforming in PBL environments. A review of the literature, however, indicated that no comprehensive instruments have yet been developed to assess students’ readiness for PBL. The purpose of this study, therefore, was to develop and provide a preliminary evaluation of an instrument to assess students’ readiness for PBL in a higher education context. It should be noted here that the instrument was not designed to measure students’ attitudes toward PBL (which cannot be assessed before students are exposed to PBL), or their behaviors within PBL (which again cannot be assessed prior to their exposure to PBL), but to provide a means by which students’ readiness for PBL could be assessed, even before they are exposed to it for the first time.

METHOD

Participants and Setting

Participants were students from two polytechnics, A and B, in Singapore. The number of students who participated from polytechnic A was 315 and that from polytechnic B was 310. Polytechnic A students were drawn from a School of Information, whereas those from polytechnic B were from Information Technology and Business schools. Following initial data screening to remove partially completed surveys, as well as instances of clearly disengaged responses (i.e., respondents who gave the same rating for every question), the final numbers of respondents from polytechnics A and B were 275 (87.3%) and 227 (73.2%), respectively. The gender distributions were 44.4% males and 55.6% females from polytechnic A, and 40.1% males and 59.9% females from polytechnic B. The age of the respondents ranged between 17 and 25 years for both polytechnics. For polytechnic A, the mean age was 18.09 years (SD=1.56), and for polytechnic B, the mean age was 18.68 years (SD=1.18). All participants were Asian, with the majority being Singaporeans (88.7% from polytechnic A and 93.8% from polytechnic B). The remainder were from Malaysia, Indonesia, China, and Thailand. In terms of ethnicity, the respondents were mostly Chinese, Indian or Malay (62.5%, 24.7% and 8.3%, respectively, from polytechnic A, and 87.7%, 7.1% and 3.1%, respectively, from polytechnic B).
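The screening rule described above (drop partially completed surveys and respondents who gave the same rating to every item) can be expressed in a few lines. The sketch below is illustrative only: it assumes responses are stored as one list of ratings per respondent with `None` marking a missing answer, and the function names are hypothetical rather than part of the study's procedure. It also reproduces the retention percentages reported for each polytechnic.

```python
def screen_responses(rows):
    """Keep complete rows (no None) whose ratings are not all identical."""
    kept = []
    for row in rows:
        if any(value is None for value in row):
            continue  # partially completed survey
        if len(set(row)) == 1:
            continue  # same rating on every item: treated as disengaged
        kept.append(row)
    return kept


def retention_pct(n_kept, n_total):
    """Percentage of the original respondents retained, to one decimal."""
    return round(100.0 * n_kept / n_total, 1)


# Hypothetical toy responses on four bipolar items (1-7 scale).
demo = [
    [4, 5, 3, 6],
    [4, 4, 4, 4],     # straight-lined -> dropped
    [2, None, 5, 3],  # incomplete -> dropped
    [7, 6, 6, 5],
]
kept = screen_responses(demo)
print(len(kept))                # 2
print(retention_pct(275, 315))  # 87.3
print(retention_pct(227, 310))  # 73.2
```

A straight-lining rule this strict only removes respondents with zero variation; softer rules (for example, long runs of identical answers) would need their own, separately justified thresholds.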

Polytechnic A students’ learning environment was entirely conducted using the PBL approach for all its full-time diploma programs, whereas polytechnic B’s students had a hybrid learning environment, comprising both traditional instructional methods and PBL. During this survey, students from both polytechnics were engaged in a module conducted in a PBL environment. Both groups of students were undertaking PBL for the first time within their respective institutions.

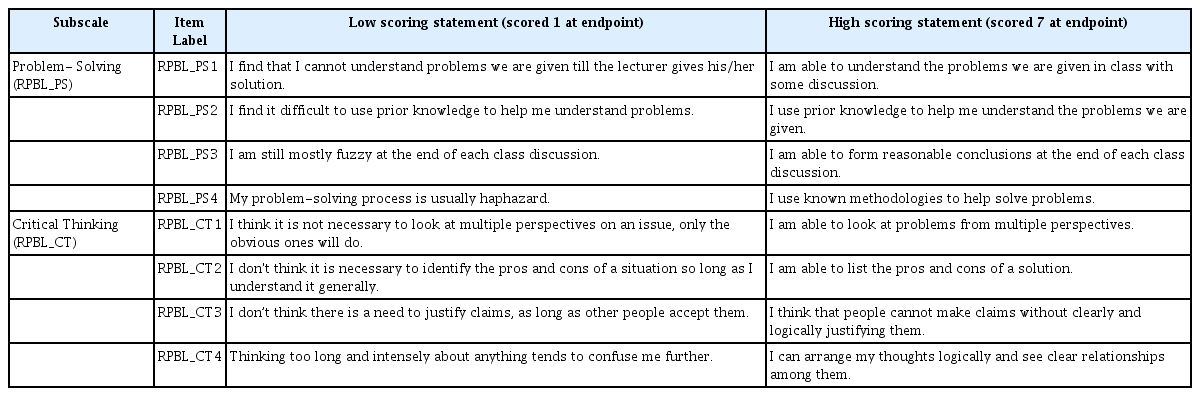

Instrument

The instrument created in this study, the Readiness for PBL (RPBL) instrument, initially included 16 items and utilized a 7-point bipolar statement rating scale. The latter type of scale was chosen for the instrument as bipolar items have been found to reduce acquiescence bias and produce better model fits in instrument analyses (Friborg, Martinussen & Rosenvinge, 2006). Each bipolar item included two full statements to avoid ambiguity and ensure meaningful responses. The instrument developed included two separate components, one focusing on the cognitive processes demanded by PBL environments (problem-solving and critical thinking), and the other focusing on the learning processes associated with PBL environments (self-directed and collaborative learning). These components were included to align with the key components of PBL environments as discussed in the introduction to this paper. Tables 1 and 2 list all items within the full RPBL instrument.

Procedure

Prior to conducting the research, ethics approval was obtained from the researchers’ affiliated institution and approvals were also obtained from the two participating institutions. Participants from polytechnic A were new students in their first semester, while participants from polytechnic B were second-year students. Both sets of participants were enrolled in modules conducted using the PBL approach. No participants had attended courses conducted in PBL within their respective institutions at the start of the semester in which the study was conducted. The survey was administered at the end of the first (13-week) semester for both polytechnics.

The instrument was completed online, hosted on the Qualtrics platform. On the day of the administration, online links were sent to the students via email during class time. Students were invited to participate in the survey at the end of their classes. They were encouraged to complete the survey in class and in one sitting so as to minimize any potential survey abandonment. To ensure that all participating classes had the opportunity to complete the survey, however, the survey was left open for up to two weeks. No special incentives were offered to respondents to participate in the survey. Students were told that participation in the survey was voluntary and that they could leave the survey at any point.

Prior to the actual survey, a time trial was conducted with a small group (n=20) of polytechnic A students who were not part of the main study. During this trial, all respondents completed the survey within 10 minutes. This duration was deemed ideal, as a longer survey could result in fatigue and a subsequent loss of data quality.

RESULTS

Both Exploratory Factor Analysis (EFA) and Confirmatory Factor Analysis (CFA) were used to evaluate the internal structure of the RPBL instrument. First, to provide a preliminary assessment of internal structure, an EFA was conducted on data from the polytechnic A participants. Second, a CFA was used to cross-validate the EFA findings, using data from the polytechnic B participants. IBM SPSS V25 was used to conduct all analyses associated with the EFA, while LISREL V8.80 was used for those associated with the CFA.

RPBL – Cognitive Processes Component

A Maximum Likelihood (ML) extraction was used in the EFA on the Cognitive Processes component scores from polytechnic A participants (n=275), as recommended by Costello and Osborne (2005). The factors extracted were rotated to approximate simple structure using the Direct Oblimin method, allowing factors to correlate in the rotation. Decisions about the number of factors to retain were made on the basis of three alternative sources of information: Kaiser’s eigenvalues greater than one criterion; the Cattell scree plot; and a parallel analysis of obtained eigenvalues. These three sources were all considered to reduce the possibility of over- or under-extracting factors from the 8-item set.
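The study ran these retention rules in SPSS; the NumPy sketch below only illustrates the logic of two of them, Kaiser's criterion and Horn's parallel analysis. It assumes eigenvalues are taken from the full item correlation matrix and compared with the mean eigenvalues of random normal data of the same size; other variants (for example, the 95th percentile of the random eigenvalues, or a reduced correlation matrix) are also in use. The simulated two-factor data and all function names are hypothetical.

```python
import numpy as np


def eigenvalues(data):
    """Eigenvalues of the item correlation matrix, largest first."""
    corr = np.corrcoef(data, rowvar=False)
    return np.sort(np.linalg.eigvalsh(corr))[::-1]


def kaiser_count(data):
    """Kaiser criterion: number of eigenvalues greater than 1."""
    return int((eigenvalues(data) > 1.0).sum())


def parallel_analysis(data, n_iter=200, seed=0):
    """Horn's parallel analysis using mean eigenvalues of random normal data
    with the same number of cases and items."""
    rng = np.random.default_rng(seed)
    n, p = data.shape
    observed = eigenvalues(data)
    random_eigs = np.array(
        [eigenvalues(rng.standard_normal((n, p))) for _ in range(n_iter)]
    )
    reference = random_eigs.mean(axis=0)
    # Retain factors only while each observed eigenvalue exceeds its random counterpart.
    n_retain = 0
    for obs, ref in zip(observed, reference):
        if obs <= ref:
            break
        n_retain += 1
    return n_retain, observed, reference


# Hypothetical two-factor data: 275 cases, 8 items, 4 items per factor.
rng = np.random.default_rng(42)
factors = rng.standard_normal((275, 2))
loadings = np.zeros((8, 2))
loadings[:4, 0] = 0.8
loadings[4:, 1] = 0.8
items = factors @ loadings.T + 0.6 * rng.standard_normal((275, 8))

n_retain, observed, reference = parallel_analysis(items)
print("parallel analysis retains:", n_retain)
print("Kaiser criterion retains:", kaiser_count(items))
print("observed - random, factor 2:", round(observed[1] - reference[1], 2))
```

The printed difference for the second factor corresponds to the quantity reported in the text for the RPBL data (an obtained-minus-random eigenvalue); a negative value argues against retaining that factor.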

Prior to conducting the EFA on the RPBL Cognitive Processes component, screening analyses were performed to ensure compliance with all relevant EFA assumptions. Skewness and kurtosis coefficients indicated no significant departures from normality in the item distributions, based on Kline’s (2005) criteria (values below |3.0| for skewness and below |8.0| for kurtosis). Visual examinations of bivariate scatterplots indicated that the relationships between all item score pairs were linear. Using standard (z) scores, no univariate outliers were identified (all z-scores ≤ |3.0|), and Mahalanobis distance χ2 values suggested no significant multivariate outliers at the 0.001 level. Indices of factorability (i.e., the Kaiser-Meyer-Olkin, or KMO, test, and Bartlett's test of sphericity) also indicated that EFA was suitable for use with this score set. With a high case to item ratio of 34.38, the sample used was large enough to yield reliable estimates of correlations among the variables.
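The screening checks listed in the preceding paragraph can be sketched as a single function. This is a hedged illustration, not the SPSS procedure used in the study: it assumes Kline's kurtosis cut-off refers to excess kurtosis (normal distribution = 0), uses sample standard deviations for the z-scores, compares squared Mahalanobis distances with a chi-square critical value at p = .001, and computes the KMO measure from anti-image (partial) correlations. The function name and the simulated data are hypothetical.

```python
import numpy as np
from scipy import stats


def screen_items(data, mahal_alpha=0.001, z_cut=3.0):
    """Univariate/multivariate screening and factorability checks for an
    (n respondents x p items) score matrix."""
    data = np.asarray(data, dtype=float)
    n, p = data.shape

    # Univariate normality: sample skewness and excess kurtosis per item.
    skew = stats.skew(data, axis=0)
    kurt = stats.kurtosis(data, axis=0)  # Fisher definition: normal -> 0

    # Univariate outliers: cases with any standardized score beyond |z_cut|.
    z = stats.zscore(data, axis=0, ddof=1)
    n_uni = int((np.abs(z) > z_cut).any(axis=1).sum())

    # Multivariate outliers: squared Mahalanobis distance vs chi-square(p).
    centered = data - data.mean(axis=0)
    inv_cov = np.linalg.inv(np.cov(data, rowvar=False))
    d2 = np.einsum("ij,jk,ik->i", centered, inv_cov, centered)
    n_multi = int((d2 > stats.chi2.ppf(1 - mahal_alpha, df=p)).sum())

    # Kaiser-Meyer-Olkin measure from zero-order and partial correlations.
    r = np.corrcoef(data, rowvar=False)
    inv_r = np.linalg.inv(r)
    partial = -inv_r / np.sqrt(np.outer(np.diag(inv_r), np.diag(inv_r)))
    off = ~np.eye(p, dtype=bool)
    r2, q2 = (r[off] ** 2).sum(), (partial[off] ** 2).sum()
    kmo = r2 / (r2 + q2)

    # Bartlett's test of sphericity (H0: correlation matrix is an identity).
    bartlett_chi2 = -(n - 1 - (2 * p + 5) / 6) * np.log(np.linalg.det(r))
    bartlett_p = stats.chi2.sf(bartlett_chi2, p * (p - 1) // 2)

    return {
        "max_abs_skew": float(np.abs(skew).max()),
        "max_abs_kurtosis": float(np.abs(kurt).max()),
        "univariate_outlier_cases": n_uni,
        "multivariate_outlier_cases": n_multi,
        "kmo": float(kmo),
        "bartlett_chi2": float(bartlett_chi2),
        "bartlett_p": float(bartlett_p),
        "case_to_item_ratio": n / p,
    }


# Hypothetical data with the same shape as the polytechnic A sample.
rng = np.random.default_rng(1)
factors = rng.standard_normal((275, 2))
loadings = np.zeros((8, 2))
loadings[:4, 0] = 0.8
loadings[4:, 1] = 0.8
items = factors @ loadings.T + 0.6 * rng.standard_normal((275, 8))

report = screen_items(items)
for key, value in report.items():
    print(key, value)
```

With 275 cases and 8 items the case-to-item ratio is 34.375, which rounds to the 34.38 reported in the text.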

The EFA indicated that either a one- or a two-factor model would be tenable in describing the latent structure of the RPBL Cognitive Processes component. While the parallel analysis suggested retaining only one factor (difference between the second random eigenvalue and the second obtained eigenvalue -.14), the scree plot suggested two factors, with the plot of eigenvalues flattening distinctly beyond two factors. The eigenvalue for the second factor also fell just above Kaiser’s eigenvalue greater than one criterion (1.00). Given these results, either a one- or a two-factor model was deemed to be plausible in the EFA, and the CFA on polytechnic B participants was used to compare the one- and two-factor models directly. For the one-factor model, the single extracted factor accounted for 56.82% of the total item variance. For the two-factor model, the two factors together accounted for 67.37% of the total item variance (54.85% and 12.52% for factors 1 and 2, respectively).
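Because each of the p standardized items has unit variance, the total item variance equals p, so a factor's share is 100 × (its sum of squared loadings, or its eigenvalue) / p. The stdlib sketch below shows this arithmetic under that assumption; the loadings are hypothetical. It applies to unrotated (extraction) solutions: with an oblique rotation such as Direct Oblimin the factors are correlated, so their rotated sums of squared loadings cannot simply be added to give a total.

```python
def pct_variance_from_loadings(loadings):
    """Percent of total variance in p standardized items explained by each factor:
    100 * (sum of squared loadings on the factor) / p."""
    p = len(loadings)
    n_factors = len(loadings[0])
    return [100.0 * sum(row[j] ** 2 for row in loadings) / p for j in range(n_factors)]


def pct_variance_from_eigenvalue(eigenvalue, p):
    """Percent of total variance for a single eigenvalue of a p x p correlation matrix."""
    return 100.0 * eigenvalue / p


# Hypothetical one-factor solution: eight items each loading 0.8.
one_factor = [[0.8] for _ in range(8)]
print(pct_variance_from_loadings(one_factor))

# For eight items, 56.82% of the variance corresponds to an eigenvalue of 4.5456.
print(pct_variance_from_eigenvalue(4.5456, 8))
```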

Communalities and oblique-rotated factor loadings obtained in the EFA based on the two-factor model are shown in Table 3. Based on the pattern coefficients, item loadings across the two factors were consistent with the proposal that the Cognitive Processes component of the RPBL instrument measured readiness for two different aspects of PBL environments: problem-solving and critical thinking. Although the parallel analysis suggested retaining only one factor, the loadings in Table 3 show that each item loaded strongly on one of the two factors, with minimal cross-loadings on the other. All RPBL_PS items loaded on one factor, while the RPBL_CT items loaded on the other. The Cronbach’s αs for the Problem-Solving and Critical Thinking subscales were also high, at 0.85 and 0.81, respectively.

Table 3. Rotated factor loadings for items within the Cognitive Processes component of the RPBL instrument (polytechnic A, n=275)
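The subscale reliabilities reported here are Cronbach's α, α = k/(k − 1) × (1 − Σσ²ᵢ / σ²ₓ), where k is the number of items, σ²ᵢ the variance of item i, and σ²ₓ the variance of the summed subscale score. The stdlib sketch below implements that formula with sample variances; the input layout (one list of ratings per respondent) and the toy data are hypothetical.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """item_scores: list of respondents, each a list of k item ratings."""
    k = len(item_scores[0])
    columns = list(zip(*item_scores))            # one tuple per item
    sum_item_var = sum(variance(col) for col in columns)
    total_var = variance([sum(row) for row in item_scores])
    return k / (k - 1) * (1 - sum_item_var / total_var)


# Hypothetical ratings from four respondents on a three-item subscale.
toy = [
    [1, 1, 2],
    [2, 2, 2],
    [3, 3, 3],
    [4, 4, 5],
]
print(round(cronbach_alpha(toy), 3))  # 0.978
```

Because α rises with the number of items, other things being equal, short subscales (such as the three retained RPBL_SDL items) tend to yield lower values than longer ones.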

Given that either a one- or two-factor model could be justified from the EFA, however, both models were tested in the CFA performed on data from polytechnic B (n = 227). The first was based on the original two-factor structure specified, in which the eight RPBL Cognitive Processes items measured students’ readiness for two aspects of PBL (problem-solving and critical thinking). Given the results of the parallel analysis obtained for the EFA, a second, one-factor model was also tested, in which all eight items from this RPBL component loaded on a single factor. The change in χ2 between the two models was then used to evaluate whether their fit differed significantly. As for the EFA, data screening analyses were first performed to ensure that all relevant assumptions for CFA in terms of normality, linearity, factorability, and the absence of univariate and multivariate outliers were met. These analyses all produced satisfactory results. Given the high case to item ratio of 28.38, the sample size was also deemed large enough to yield reliable correlation estimates.
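The one-factor model is nested within the two-factor model (it is the two-factor model with the factor correlation fixed at 1), so the two can be compared with a chi-square difference test. Under standard identification with eight items, the one-factor model has 20 degrees of freedom and the two-factor model 19, giving the Δdf = 1 reported in the text. The stdlib sketch below computes the chi-square tail probability from closed forms of the regularized upper incomplete gamma function for integer degrees of freedom; the model chi-square values in the usage example are hypothetical, since only their difference is reported.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square(df) variable for integer df >= 1,
    using closed forms of the regularized upper incomplete gamma function."""
    y = x / 2.0
    if df % 2 == 0:
        k = df // 2
        return math.exp(-y) * sum(y ** i / math.factorial(i) for i in range(k))
    k = (df - 1) // 2
    tail = sum(y ** (i + 0.5) / math.gamma(i + 1.5) for i in range(k))
    return math.erfc(math.sqrt(y)) + math.exp(-y) * tail


def chi_square_difference(chi2_restricted, df_restricted, chi2_full, df_full):
    """Chi-square difference test for nested ML models; 'restricted' is the
    model with fewer free parameters (here, the one-factor model)."""
    delta = chi2_restricted - chi2_full
    delta_df = df_restricted - df_full
    return delta, delta_df, chi2_sf(delta, delta_df)


# Hypothetical model chi-squares chosen so that their difference is 196.34.
delta, delta_df, p_value = chi_square_difference(250.0, 20, 53.66, 19)
print(round(delta, 2), delta_df, p_value < 0.001)
# The .05 critical value for df = 1 is about 3.84.
print(round(chi2_sf(3.841458820694124, 1), 4))  # 0.05
```

The plain difference test assumes ML estimation under multivariate normality; with robust (scaled) estimators a scaled difference test, such as the Satorra-Bentler version, would be needed instead.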

Despite the results of the parallel analysis obtained from the EFA, the change in χ2 between the one- and the two-factor models was significant, ∆χ2(1) = 196.34, p < 0.05, indicating that the fit of the original two-factor model was superior to that of the one-factor model. Given this result, the two-factor model was retained for interpretation. Comparing the obtained fit indices with recommended cut-offs for each index (see Hooper, Coughlan & Mullen, 2008), the two-factor model fit the data well. The Goodness of Fit Index (GFI) and Adjusted Goodness of Fit Index (AGFI) values were 0.97 and 0.92, respectively, indicating that the proportion of variance accounted for by the estimated population covariance exceeded the recommended minimum levels (GFI ≥ 0.95 and AGFI ≥ 0.90). The Normed Fit Index (NFI) and Non-Normed Fit Index (NNFI) values for the two-factor model (0.94 and 0.94, respectively) fell only marginally below typical recommended levels (i.e., 0.95, or an improvement of fit by 95% relative to the null model). The Root Mean Square Error of Approximation (RMSEA), which estimates the discrepancy per degree of freedom between the hypothesized model and the population covariance matrix, was 0.08, at the upper bound of the recommended range. The Standardized Root Mean Square Residual (SRMR), indicating the standardized difference between the observed correlations and the predicted correlation values, was 0.04, well within the recommended level (< 0.08). The Comparative Fit Index (CFI) of 0.99 was also well within recommended levels (≥ 0.95). Based on these results and those from the EFA, the internal structure of items within the Cognitive Processes component of the RPBL based on a two-factor structure appeared to be sound.
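Several of the indices above are simple functions of the model chi-square, its degrees of freedom, the sample size, and the chi-square of the baseline (null) model. The stdlib sketch below uses the usual textbook formulas; it is an illustration, not LISREL's implementation, which may differ in detail (for example, N rather than N − 1 in the RMSEA). GFI, AGFI and SRMR need the full observed and implied covariance matrices and are not shown. The baseline chi-square in the example is hypothetical because the paper does not report it.

```python
import math


def rmsea(chi2, df, n):
    """Root Mean Square Error of Approximation (uses N - 1)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2_m, df_m, chi2_b, df_b):
    """Comparative Fit Index relative to the baseline (null) model."""
    d_m = max(chi2_m - df_m, 0.0)
    d_b = max(chi2_b - df_b, d_m)
    return 1.0 - d_m / d_b if d_b > 0 else 1.0


def nfi(chi2_m, chi2_b):
    """Normed Fit Index: proportional reduction in chi-square vs the baseline."""
    return (chi2_b - chi2_m) / chi2_b


def nnfi(chi2_m, df_m, chi2_b, df_b):
    """Non-Normed Fit Index (Tucker-Lewis Index)."""
    ratio_b = chi2_b / df_b
    return (ratio_b - chi2_m / df_m) / (ratio_b - 1.0)


# RMSEA for the initial Learning Processes model reported later in the text
# (chi-square(13) = 96.59, N = 227).
print(round(rmsea(96.59, 13, 227), 2))  # 0.17

# Incremental indices need a baseline chi-square; 400 on 21 df is hypothetical.
print(round(cfi(26.06, 11, 400.0, 21), 3), round(nfi(26.06, 400.0), 3))
```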

RPBL – Learning Processes Component

As for the Cognitive Processes component, an EFA based on an ML extraction procedure with Direct Oblimin rotation was used to obtain a preliminary assessment of the internal structure of the RPBL Learning Processes component. Preliminary data screening again indicated no notable deviations from EFA assumptions within this dataset, suggesting that the use of this approach was tenable for these data. The initial EFA indicated three factors within this component of the RPBL, based on the scree plot and Kaiser’s eigenvalues greater than one criterion. An examination of the item loadings produced by this EFA indicated that one item (RPBL_SDL3: “In the classroom, I expect the teacher to tell us exactly what we are expected to do” vs. “I usually do not need to be told exactly what is expected of me”) did not load together with any other items, forming a third factor on its own. The initial communality for this item was also low (0.11). Following the removal of RPBL_SDL3, the EFA indicated two distinct factors based on the scree plot, Kaiser’s criterion, and the parallel analysis (a difference of -0.29 between the obtained and randomly generated eigenvalues for the third factor). These accounted for 31.16% and 22.44% of the total item variance, respectively (53.60% collectively).
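In ML and principal-axis extraction, SPSS typically reports an item's initial communality as its squared multiple correlation (SMC) with all other items, SMC_i = 1 − 1/(R⁻¹)_ii. The NumPy sketch below computes SMCs and flags weak items. The 0.2 threshold, the function names and the simulated data (seven items on two factors plus one unrelated item) are hypothetical illustrations of how an item such as RPBL_SDL3 can stand out, not the study's actual decision rule.

```python
import numpy as np


def initial_communalities(data):
    """Squared multiple correlation of each item with all other items:
    SMC_i = 1 - 1 / (R^-1)_ii."""
    r = np.corrcoef(data, rowvar=False)
    return 1.0 - 1.0 / np.diag(np.linalg.inv(r))


def flag_low_communality(data, names, threshold=0.2):
    """Names of items whose initial communality falls below the threshold."""
    smc = initial_communalities(data)
    return [name for name, h in zip(names, smc) if h < threshold]


# Hypothetical data: items 1-4 load on one factor, items 5-7 on another,
# and item 8 is unrelated noise.
rng = np.random.default_rng(7)
n = 275
f = rng.standard_normal((n, 2))
loadings = np.zeros((8, 2))
loadings[:4, 0] = 0.75
loadings[4:7, 1] = 0.75
unique_sd = np.sqrt(1 - (loadings ** 2).sum(axis=1))
items = f @ loadings.T + unique_sd * rng.standard_normal((n, 8))
names = [f"item{i}" for i in range(1, 9)]

print(np.round(initial_communalities(items), 2))
print(flag_low_communality(items, names))
```

A low SMC alone does not justify removing an item; in the study it was combined with the item's failure to load alongside any other item.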

Communalities and oblique-rotated loadings for the RPBL Learning Processes component are shown in Table 4. Based on the pattern matrix, the item loadings across the two factors were consistent with the proposal that the items measured two learning processes associated with PBL environments: self-directed and collaborative learning. The Cronbach’s α for the self-directed learning subscale (RPBL_SDL) was 0.58, while for the collaborative learning subscale (RPBL_CL), it was 0.65. Thus, the internal consistencies for this component of the RPBL were somewhat lower than for the Cognitive Processes component. This result suggests that these two subscales were factorially more complex than were the two Cognitive Processes subscales. The results of the EFA, however, aligned well with the proposed structure of the RPBL Learning Processes component.

Table 4. Communalities and rotated factor loadings for items within the Learning Processes component of the RPBL instrument (polytechnic A, n=275)

Given the results of the EFA on polytechnic A, the model tested in the CFA on scores from polytechnic B for the RPBL Learning Processes component excluded one of the four SDL items (RPBL_SDL3), leaving a total of seven items within this component. The initial CFA on scores for polytechnic B indicated that the proposed two-factor model did not fit the data well, based on accepted cut-points for CFA fit indices (see Hooper, Coughlan & Mullen, 2008). The overall chi-square obtained for the initial model was χ2(13) = 96.59, p < .05 (χ2/df = 7.43). The GFI and AGFI values were 0.89 and 0.77, respectively, which fell below recommended minimum levels (GFI ≥ 0.95 and AGFI ≥ 0.90). The NFI and NNFI values (0.78 and 0.67, respectively) also fell short of recommended levels (≥ 0.95 for both), as did the RMSEA and SRMR values (0.17 and 0.14, respectively, against a recommended maximum of 0.08), and the CFI value (0.80). Based on the modification indices obtained, paths were then added between the latent factor RPBL_SDL and two items from the RPBL_CL subscale (RPBL_CL2 and RPBL_CL4). With this modification made, the model fit to the data was excellent, with χ2(11) = 26.06, p < .05 (χ2/df = 2.37), NFI/NNFI = 0.94, GFI = 0.97, AGFI = 0.92, RMSEA = 0.08, SRMR = 0.04, and CFI = 0.97. Based on these results, while the CFA supported the internal structure of the RPBL Learning Processes component, it was clear that two items from the RPBL_CL subscale did exhibit significant cross-loadings with the RPBL_SDL subscale.
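The degrees of freedom of a CFA model equal the number of unique variances and covariances, p(p + 1)/2, minus the number of free parameters. The stdlib sketch below counts them under one common identification, assumed here: each item has one primary loading, factor variances are fixed at 1, factors correlate freely, and no error covariances are estimated. Under these assumptions the seven-item, two-factor model has 13 df, and each added cross-loading costs one df, so the modified model has 11 df; 26.06/11 gives the reported χ2/df of 2.37. The function name is hypothetical.

```python
def cfa_df(n_items, n_factors, n_cross_loadings=0):
    """Degrees of freedom for a CFA in which every item has one primary loading,
    factor variances are fixed at 1, all factors correlate freely and no error
    covariances are estimated. Each extra cross-loading costs one df."""
    moments = n_items * (n_items + 1) // 2
    free_params = (
        n_items                              # primary loadings
        + n_items                            # unique (error) variances
        + n_factors * (n_factors - 1) // 2   # factor correlations
        + n_cross_loadings                   # added paths
    )
    return moments - free_params


print(cfa_df(7, 2))                      # 13: initial Learning Processes model
print(cfa_df(7, 2, n_cross_loadings=2))  # 11: after adding two cross-loadings
print(round(96.59 / 13, 2), round(26.06 / 11, 2))  # 7.43 2.37
```

The same counting gives 20 and 19 df for the one- and two-factor Cognitive Processes models (eight items), matching the single degree of freedom in the reported Δχ2 test.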

DISCUSSION

Despite evidence to suggest that students’ readiness to engage with various types of learning environments is an important predictor of their learning outcomes, there remains, to date, a scarcity of validated instruments designed to assess students’ readiness to engage in PBL contexts. Results of this preliminary evaluation suggest that the RPBL instrument developed may serve to address this gap. These results indicated that the internal structure of the Cognitive Processes component of the RPBL was aligned with the proposed theoretical structure. Loadings from the EFA performed on polytechnic A data indicated that each item from this component loaded strongly upon its respective proposed factor, and minimally with the other factor. Internal consistencies from this sample for both subscales were also high. The CFA using data from polytechnic B also supported the internal structure of this component, indicating excellent fit of the proposed two-factor model to the item data.

The evaluation of the internal structure of the Learning Processes component was also generally favourable. Initially, loadings from the EFA performed on polytechnic A student data indicated one item within the RPBL_SDL subscale that did not load with any other items in that factor. Following removal of this item, all other items loaded strongly upon their respective proposed factors, and minimally with the other factor within the component. Internal consistencies for this sample, however, were relatively low, both for the collaborative learning subscale (RPBL_CL, 0.65) and for the self-directed learning subscale (RPBL_SDL, 0.58). These results suggest that the items within each of the subscales were not entirely consistent in what they measured. Furthermore, the initial CFA using data from polytechnic B did not fit the data well. The fit was excellent, however, when two items from the collaborative learning subscale (RPBL_CL) were allowed to cross-load with the self-directed learning subscale (RPBL_SDL). Thus, while the results broadly supported the internal structure of this component of the RPBL instrument, revisions of the items within the Learning Processes component could enhance the performance of these subscales further. This represents a potential avenue for further research on the instrument.

The evaluation of the RPBL presented in this paper focused only on the internal structure of the instrument. Based on Messick’s framework of validity assessment (Messick, 1995) and published AERA, APA, and NCME guidelines, various further steps can be incorporated to “function as a general validity criteria or standards for all educational and psychological measurement” (Messick, 1995, p. 741). Thus, whilst the internal structure of the RPBL instrument was evaluated in this study, future research is needed to provide evidence of its construct validity based on: (i) the test content (e.g., having the instrument reviewed by a panel of experts in the field of PBL); (ii) participants’ response processes (e.g. conducting “think aloud” protocols while respondents are completing the instrument); (iii) relationships between the instrument and other, theoretically related variables (e.g., convergent, discriminant and criterion-related correlational validity studies); and (iv) the consequences of using the instrument in ‘real world’ settings (e.g., exploring positive and negative consequences that result from using the instrument in practice). All of these studies were beyond the scope of the research reported here. Such studies can be conducted in the longer term, to establish whether the use of the RPBL instrument does indeed provide data that institutions can use to enhance students’ experiences within PBL environments.

CONCLUSIONS

With many institutions in Singapore now making use of PBL, it is important for students’ readiness for these environments to be assessed. Efforts to achieve this goal may, however, be hampered by a scarcity of suitable instruments for this purpose. The results of the present study suggest that, while further validation efforts would be beneficial, the RPBL holds considerable promise for meeting this important need.